Getting A Local Mastodon Setup In Docker

This is probably the first in a series of posts as I dig into the technical aspects of mastodon. My goal is to get a better understanding of the design of ActivityPub and how mastodon itself is designed to use ActivityPub. Eventually I want to learn enough to maybe do some hacking and create some of the experiences I want that mastodon doesn’t support today.

The first milestone is just getting a mastodon instance set up on my laptop. I’m gonna give some background and context. If you want to skip straight to the meat of things, jump ahead to “Let’s run a mastodon”.

Some background

Mastodon is a complex application with lots of moving parts. For now, all I want is to get something running so I can poke at it. Docker should be a great tool for this, because a lot of that complexity can be packaged up in pre-built images. I tried several times, using the official docs and various alternative projects, to get a working mastodon instance in docker. But I kept running into problems that were hard to understand and harder to resolve.

I have a lot to learn about all the various pieces of mastodon and how they fit together. But I understand docker pretty well. So after some experimenting, I was able to get an instance running on my own. The rest of this post will be dedicated to explaining what I did and what I learned along the way.

One final note. I know many folks work hard to write docs and provide an out of the box dev experience that works. This isn’t meant to dismiss that hard work. It just didn’t work for me. I’m certainly going to share this experience with the mastodon team. Hopefully these lessons can make the experience better for others in the future.

The approach

Here’s the outline of what we’re doing.

We’re going to use a modified version of the docker-compose.yml that comes in the official mastodon repo. It doesn’t work out of the box. So I had to make some heavy tweaks. As of this writing, the mastodon docs seem to want people to use an alternate setup based on Dev Containers. I found that very confusing, and it didn’t work for me at all.

Once we have all of the docker images we need, all of the headaches are in configuring them to work together. Most of mastodon is a ruby on rails app with a database. But there is also a node app to handle streaming updates, redis for caching and background jobs, and we need to handle file storage. We will do the minimum configuration to get all of that set up and able to talk to each other.

There is also support for sending emails and optional search capabilities. These are not required just to get something working, so we’ll ignore them for now. It’s also worth noting that if we want to develop code in mastodon, we need to put our rails app in development mode. That introduces another layer of headaches and errors that I haven’t figured out yet. So that will be a later milestone. For now, all of this will be in “production” mode by default. That’s how the docker image comes packaged. Keep it simple.

There are still many assumptions here. I am running on macOS with Apple Silicon (M3). If you’re trying this out, you may run into different issues depending on your environment.

Pre-requisites

We need docker. And a relatively new version. The first thing I did was ditch the version: 3 specifier in the docker-compose.yml. Using versions in these files is deprecated, and we can use some newer features of docker compose. I have v4.30.0 of Docker Desktop for Mac.

We also need caddy. Mastodon instances require a domain in most cases. This is mostly about identity and security. It would be bad if an actor on mastodon could change their identity very easily just by pretending to be a different domain or account. There are ways around this, but I couldn’t get any of them to work for me. That complicates our setup. Because we can’t just use localhost in the browser. We need a domain, which means we also need HTTPS support. Modern browsers require it by default unless you jump through a bunch of hoops.

Caddy gives us all of that out of the box really easily. It will be the only thing running outside of docker. There’s only one caveat with caddy. It can do SSL termination so easily because it creates its own certificates on the fly, and it does that by installing its own root cert on your machine. You’ll have to give it permission by putting in your laptop password the first time you run caddy.

If that makes you nervous, feel free to skip this and use whatever solution you’re comfortable with for SSL termination. But as far as I know, you need this part.

Choose a domain for your local instance. For me it was polotek-social.local. Something that makes it obvious that this is not a real online instance. Add an entry to your /etc/hosts that points this domain at 127.0.0.1 (localhost). Or whatever people have to do on Windows these days.
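Here is a minimal sketch of that hosts-file step as a shell function. The function name and the optional second argument are made up for illustration; the only facts taken from above are the domain and that it should resolve to the local machine.

```shell
# add_host_entry DOMAIN [HOSTS_FILE]   (hypothetical helper, not part of mastodon)
# Appends "127.0.0.1 DOMAIN" unless DOMAIN is already listed on a non-comment line.
# HOSTS_FILE defaults to /etc/hosts; pass another path to try it out safely.
add_host_entry() {
  domain=$1
  hosts_file=${2:-/etc/hosts}
  # Compare each hostname field exactly, so dots in the domain are not treated as wildcards.
  if awk -v d="$domain" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == d) found = 1 } END { exit !found }' "$hosts_file" 2>/dev/null; then
    echo "$domain already present in $hosts_file"
  else
    printf '127.0.0.1 %s\n' "$domain" >> "$hosts_file"
    echo "added $domain to $hosts_file"
  fi
}
```

Writing to the real /etc/hosts needs root, so in practice you would call this from a root shell, or just add the line `127.0.0.1 polotek-social.local` by hand.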

Let’s run a mastodon

I put all of my changes in my fork of the official mastodon repo. You can clone this branch and follow along. All of the commands assume you are in the root directory of the cloned repo. https://github.com/polotek/mastodon/tree/polotek-docker-build

> git clone git@github.com:polotek/mastodon.git

> cd mastodon

> git checkout polotek-docker-build

I rewrote the docker section of the README.md to outline the new instructions. I’m going to walk through my explanation of the changes.

Pull docker images

This is the easiest part. All of the docker images are prepackaged. Even the rails app. You can use the docker compose command to pull them all. It’ll take a minute or 2.

> docker compose pull

Setup config files

We’re using a couple of config files. The repo comes with .env.production.sample. This is a nice way to outline the minimum configuration that is required. You can copy that to .env.production and everything is already set up to look for that file. The only thing you have to do here is update the LOCAL_DOMAIN field. This should be the same as the domain you chose and put in your /etc/hosts.
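A rough sketch of that copy-and-edit step, assuming the sample file contains a line starting with `LOCAL_DOMAIN=`. The function name and its optional path arguments are illustrative, not something the repo provides.

```shell
# make_env_file DOMAIN [SAMPLE] [TARGET]   (hypothetical helper)
# Copies the sample config and rewrites only the LOCAL_DOMAIN line.
make_env_file() {
  domain=$1
  sample=${2:-.env.production.sample}
  target=${3:-.env.production}
  # Every other line of the sample is copied through unchanged.
  sed "s|^LOCAL_DOMAIN=.*|LOCAL_DOMAIN=${domain}|" "$sample" > "$target"
  # Print the resulting line so it is easy to confirm the edit took effect.
  grep '^LOCAL_DOMAIN=' "$target"
}
```

Run from the repo root, `make_env_file polotek-social.local` should leave you with a .env.production whose LOCAL_DOMAIN matches the name in /etc/hosts.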

You can put all of your configuration in this file. But I found it more convenient to separate out the various secrets. These often need to be changed or regenerated. I wrote a script to make that repeatable. Any secrets go in .env.secrets. We’ll come back to how you get those values in a bit.

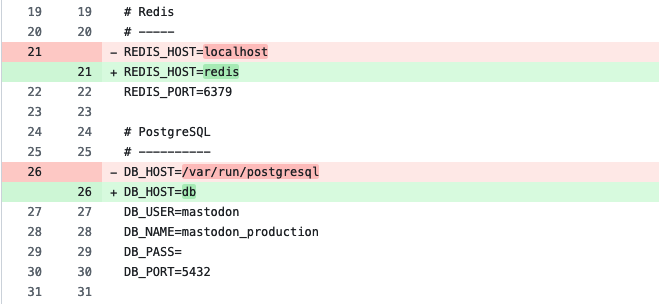

I had to make some other fixes here. Because we’re using docker, we need to update how the rails app finds the other dependencies. The default values seem to assume that redis and postgres are reachable locally on the same machine. I had to change those values to match the docker setup. The REDIS_HOST is redis, and the DB_HOST is db. Because that’s what they are named in the docker-compose file.
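Inside the compose network, containers reach each other by service name, which is why `redis` and `db` work as hostnames. The check below is a small sketch for catching a leftover `localhost`; the function name is invented, and it assumes the values are written as plain `KEY=value` lines.

```shell
# check_service_hosts [ENV_FILE]   (hypothetical helper)
# Verifies that REDIS_HOST and DB_HOST name the compose services
# ("redis" and "db") instead of a host on the local machine.
check_service_hosts() {
  env_file=${1:-.env.production}
  status=0
  for pair in REDIS_HOST=redis DB_HOST=db; do
    # -x requires the whole line to match, so REDIS_HOST=redis2 would not pass.
    if grep -qx "$pair" "$env_file"; then
      echo "ok: $pair"
    else
      echo "expected $pair in $env_file"
      status=1
    fi
  done
  return $status
}
```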

Diff of config file on github

The rest of the changes are just disabling non-essential services like elastic search and s3 storage.

Generate secrets

We need just a handful of config fields that are randomly generated and considered sensitive. Rails makes it easy to generate secrets. But running the required commands through docker and getting the output in the right place is left as an exercise for the reader. I added a small script that runs these commands and outputs the right fields.

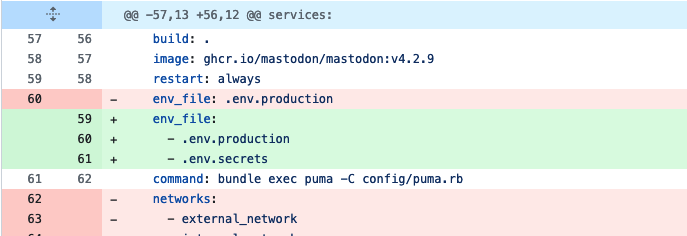

Rather than try to edit the .env.production file in the right places every time secrets get regenerated, I think it’s much easier to have them in a separate file. Fortunately, docker-compose allows us to specify multiple files to fill out the environment variables.

Diff of config file on github

This was a nice quality of life change. Now regenerating secrets and making them available is just one command.

> bin/gen_secrets > .env.secrets
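For readers without the fork checked out, a script along these lines might look roughly like the sketch below. This is a guess at the shape, not the actual bin/gen_secrets from the branch. It assumes the two rails commands that mastodon's sample config points to (`rails secret` and `rails mastodon:webpush:generate_vapid_key`), and the `RAILS_RUN` override is an invented hook so the function can be tried without docker.

```shell
# Sketch only: the real bin/gen_secrets in the fork may differ.
# rails_run wraps a one-off web container. RAILS_RUN is a hypothetical override;
# the unquoted expansion is deliberate so the default splits into separate words.
rails_run() {
  ${RAILS_RUN:-docker compose run --rm web bundle exec rails} "$@"
}

gen_secrets() {
  # Each call produces a fresh random value.
  echo "SECRET_KEY_BASE=$(rails_run secret)"
  echo "OTP_SECRET=$(rails_run secret)"
  # Assumption: this task prints its own VAPID_PRIVATE_KEY=... and VAPID_PUBLIC_KEY=... lines.
  rails_run mastodon:webpush:generate_vapid_key
}
```

Calling `gen_secrets > .env.secrets` would then play the same role as the command above. Only stdout is redirected, so any docker progress messages on stderr stay out of the file.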

Any additional secrets can be added by just updating this script. For example, I use 1password to store lots of things, even for development. And I can pull things out using their cli named op. Here’s how I configured the email secrets with the credentials from my mailgun account.

# Email

echo SMTP_LOGIN=$(op read "op://Dev/Mailgun SMTP/username")

echo SMTP_PASSWORD=$(op read "op://Dev/Mailgun SMTP/password")

Run the database

Running the database is easy.

> docker compose up db -d

You’ll need to have your database running while you run these next steps. The -d flag will run it in the background so you can get your terminal back. I often prefer to skip the -d and run multiple terminal windows. That way I can know at a glance if something is running or not. But do whatever feels good.
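If you do run the database in the background, it can help to wait until it actually accepts connections before moving on. Here is a small, generic retry helper as a sketch. The function name is made up. The usage line after it assumes `pg_isready`, which ships with postgres, is available inside the db container.

```shell
# wait_for TRIES COMMAND [ARGS...]   (hypothetical helper)
# Runs COMMAND once per second until it succeeds or TRIES attempts are used up.
wait_for() {
  tries=$1
  shift
  n=0
  while [ "$n" -lt "$tries" ]; do
    # The command's own output is discarded; only its exit status matters.
    if "$@" >/dev/null 2>&1; then
      echo "ready after $((n + 1)) attempt(s)"
      return 0
    fi
    n=$((n + 1))
    sleep 1
  done
  echo "gave up after $tries attempt(s)" >&2
  return 1
}
```

A plausible invocation for this setup would be `wait_for 30 docker compose exec db pg_isready -U mastodon`.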

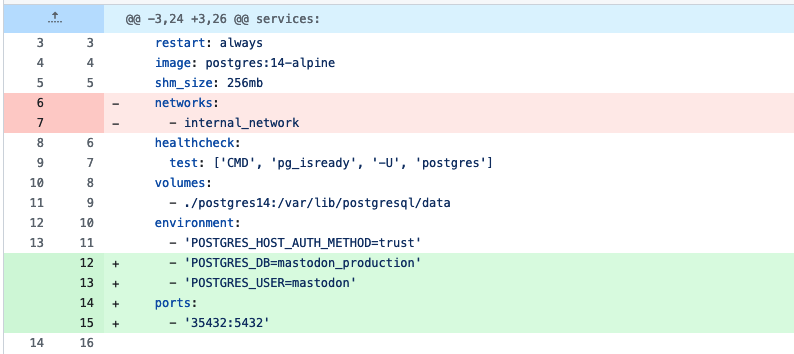

The only note here is to explain another small change to docker-compose to get this running. We’re using a docker image that comes ready to run postgres. This is great because it removes a lot of the fuss of running a database. The image also provides some convenient ways to configure the name of the database and the primary user account.

This becomes important because mastodon preconfigures these values for rails. We can see this in the default .env.production values.

DB_USER=mastodon

DB_NAME=mastodon_production

The database name is not a big issue. Rails will create a database with that name if it doesn’t exist.

But it will not create the user (maybe there’s a non-standard flag you can set?). We have to make sure postgres already recognizes a user with the name mastodon.

That’s easy enough to do by passing these as environment variables to the database container only.
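The fork makes this change directly in docker-compose.yml. As an alternative sketch, the same two values can live in a `docker-compose.override.yml`, which docker compose merges in automatically when it sits next to the main file. `POSTGRES_USER` and `POSTGRES_DB` are the variable names the official postgres image reads. The function name and the choice of an override file are assumptions for illustration.

```shell
# write_db_override [PATH]   (hypothetical helper)
# Writes a compose override that sets the postgres user and database name
# for the db service only, matching DB_USER and DB_NAME in .env.production.
write_db_override() {
  out=${1:-docker-compose.override.yml}
  cat > "$out" <<'EOF'
services:
  db:
    environment:
      POSTGRES_USER: mastodon
      POSTGRES_DB: mastodon_production
EOF
  echo "wrote $out"
}
```

One thing to keep in mind: the postgres image only reads these variables when it initializes an empty data directory, so a database volume created earlier will not pick them up.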

Diff of config file on github

Load the database schema

Here’s one thing that’s always a pain when running rails in docker. Rails won’t start successfully until you load the schema into the database and seed it with the minimal data. This is easy to do if you can run the rake tasks locally. But you can’t run the rake tasks until you have a properly configured rails. And it’s hard to figure out if your rails is configured properly because it won’t run without the database.

I don’t know what this is supposed to look like to a seasoned rails expert. But for me it’s always a matter of getting the db:setup rake task to run successfully at least once. After that, everything else starts making sense.

However, how do you get this to work in our docker setup? We can’t just do docker compose up, because the rails container will fail. We can’t use docker compose exec because that expects to attach to a container that is already running. So the best thing to do is run a one-off container that only runs the rake task. The way to achieve that with docker compose is docker compose run --rm. The --rm flag just makes sure the container gets trashed afterwards. Because we’re running our own command instead of the default one, we don’t want it hanging around and potentially muddying the waters. Once we know the magic incantation, we can set up the database.

> docker compose run --rm web bundle exec rails db:setup

Note: Don’t put quotes around the whole command. A fully quoted command gets handed to the container as a single argument instead of a program name plus its arguments, and that can cause problems in certain cases. You can put quotes around any individual arguments if you need to.
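The quoting difference is easy to see in plain shell, without docker at all. This is just an illustration with a throwaway function:

```shell
# count_args prints how many separate arguments it received.
count_args() {
  echo "$#"
}

count_args bundle exec rails db:setup      # prints 4: program plus three arguments
count_args "bundle exec rails db:setup"    # prints 1: the whole string is one argument
```

In the second form, whatever receives the command is most likely asked to find a program literally named "bundle exec rails db:setup", which doesn't exist.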

Run rails and sidekiq

If you’ve gotten through all of the steps above, you’re ready to run the whole shebang.

> docker compose up

This will start all of the other necessary containers, including rails and sidekiq. Everything should be able to recognize and connect to postgres and redis. We’re in the home stretch.

But if you try to reach rails directly in your browser by going to http://localhost:3000, you’ll get this cryptic error.

ERROR -- : [ActionDispatch::HostAuthorization::DefaultResponseApp] Blocked hosts: localhost:3000

It took me a while to track this down. It’s a nice security feature built into rails. When running in production, you need to configure a whitelist of domains that rails will run under. If it receives request headers that don’t match those domains, it produces this error. This prevents certain attacks like dns rebinding. (Which I also learned about at the same time)
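To watch this check happen without a browser, you can vary the Host header with curl. The helper below is a sketch. The function name is invented, and it assumes the web container publishes port 3000 on localhost. It only uses standard curl options.

```shell
# probe_host HOST [BASE_URL]   (hypothetical helper)
# Sends one request with the given Host header and prints only the HTTP status code.
probe_host() {
  host=$1
  base_url=${2:-http://localhost:3000}
  # -s silences progress, -o discards the body, -w prints the status code.
  curl -s -o /dev/null -w '%{http_code}\n' -H "Host: ${host}" "${base_url}/"
}
```

With rails running, `probe_host localhost:3000` should come back with a 403 if the host is being blocked. A host that is on the list will get a different answer, though the exact code depends on the rest of mastodon's production config.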

If you set RAILS_ENV=development, then localhost is added to the whitelist by default. That’s convenient, and what we would expect from dev mode. But remember we’re not running in development mode quite yet. So this is a problem for us.

The nice thing is that mastodon has added a domain to the whitelist already. Whatever value you put in the LOCAL_DOMAIN field is recognized by rails. (In fact, if you just set this to localhost you might be good to go. Shoutout to Ben.) However, when you use an actual domain, then most modern web browsers force you to use HTTPS. This is another generally nice security feature that is getting in our way right now. So we need a way to use our LOCAL_DOMAIN, terminate SSL, and then proxy the request to the rails server running inside docker.

That brings us to the last piece of the puzzle. Running caddy outside of docker.

Run a reverse proxy

The configuration for caddy is very basic. We put in our domain, then two reverse proxy entries: one for rails and one for the streaming server provided by node.js. Assuming you don’t need anything fancy, caddy provides SSL termination out of the box with no additional configuration.

# Caddyfile

polotek-social.local

reverse_proxy :3000

reverse_proxy /api/v1/streaming/* :4000

We put this in a file named Caddyfile in the root of our mastodon project, then in a new terminal window, start caddy.

> caddy run
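If you picked a different domain, a sketch like this can generate the same Caddyfile for you. The function name and optional output path are illustrative. The ports are the ones from the config above.

```shell
# write_caddyfile DOMAIN [PATH]   (hypothetical helper)
# Writes a Caddyfile that proxies DOMAIN to rails on :3000 and
# streaming API requests to the node server on :4000.
write_caddyfile() {
  domain=$1
  out=${2:-Caddyfile}
  # Unquoted EOF so that ${domain} is filled in; nothing else in the body expands.
  cat > "$out" <<EOF
${domain}

reverse_proxy :3000
reverse_proxy /api/v1/streaming/* :4000
EOF
  echo "wrote $out"
}
```

Running `write_caddyfile polotek-social.local` in the repo root and then `caddy run` from the same directory should be equivalent to the manual steps.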

Success?

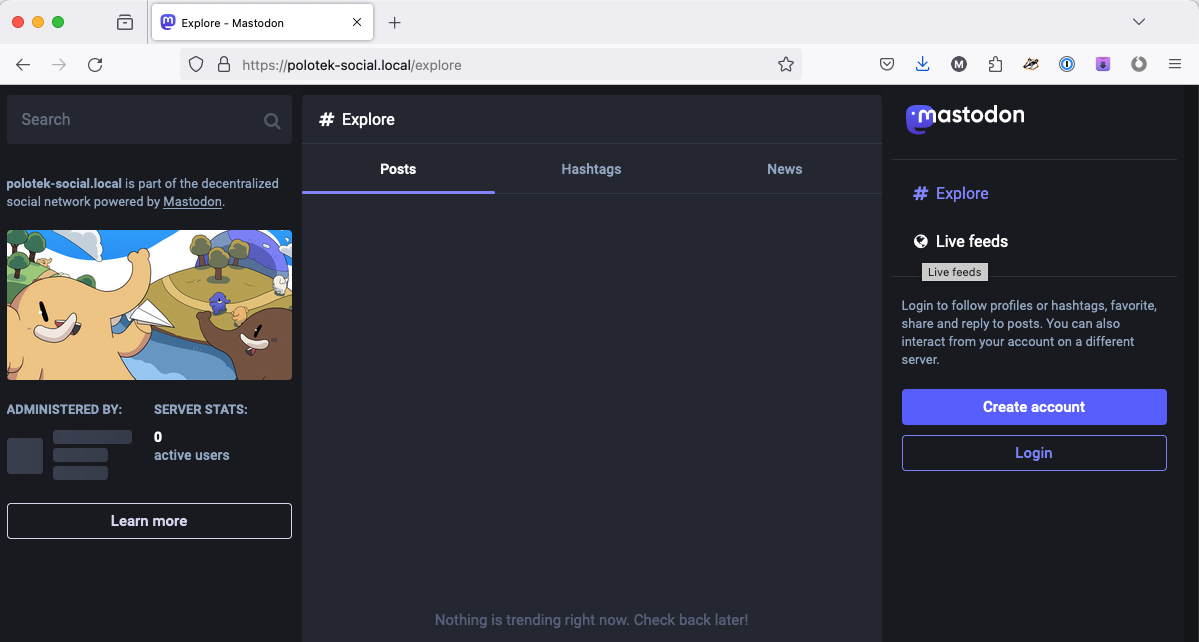

If everything has gone as planned, you should be able to put your local mastodon domain in your browser and see the frontpage of mastodon!

Mastodon frontpage running under local domain!

In the future, I’ll be looking at how to get actual accounts set up and how to see what we can see under the hood of mastodon. I’m sure I’ll work to make all of this more development friendly. But I learned a lot about mastodon just by getting this to run. I hope some of these changes can be contributed back to the main project in the future. Or at least serve as lessons that can be incorporated. I’d like to see it be easier for more people to get mastodon set up and start poking around.